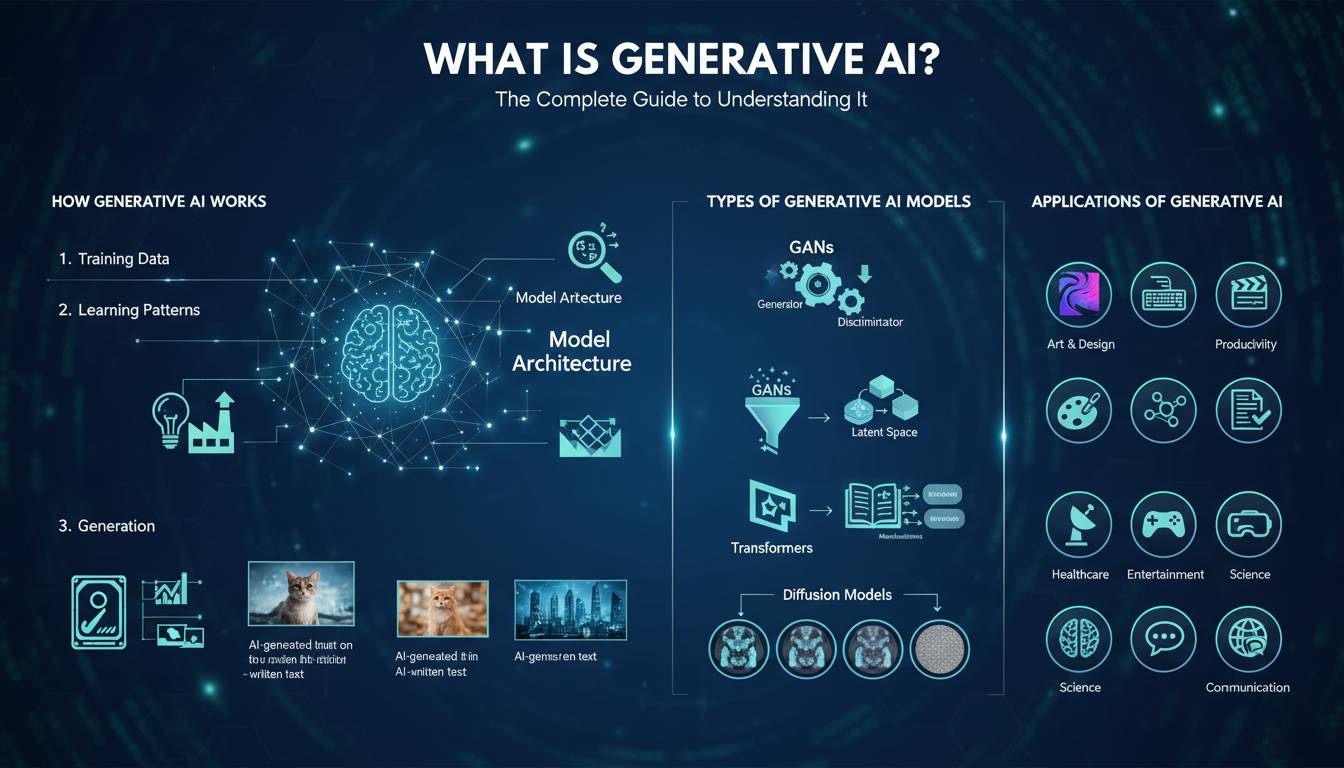

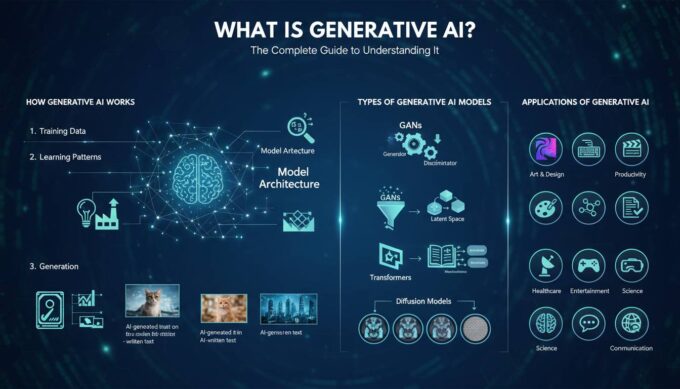

Generative AI refers to artificial intelligence systems that can create new content—including text, images, audio, video, and code—by learning patterns from existing data. Unlike traditional AI that classifies or analyzes information, generative AI produces original outputs that didn’t exist before. This technology has transitioned from research labs to mainstream adoption in under two years, fundamentally changing how individuals and businesses approach creative and analytical tasks.

Understanding Generative AI: Definition and Core Concepts

At its foundation, generative AI uses machine learning models—particularly large language models (LLMs) and diffusion models—to understand and recreate patterns found in training data. These systems don’t simply memorize and repeat information; they identify statistical relationships within massive datasets and use that understanding to generate novel content that resembles what they’ve learned.

The distinction between discriminative and generative AI provides essential context. Discriminative models, which dominated AI development for years, excel at classification tasks: distinguishing spam from legitimate emails, identifying objects in photos, or predicting whether a loan applicant will default. Generative models, by contrast, learn the underlying distribution of data and can sample from that distribution to create new instances.
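
The contrast can be made concrete with a toy example. The sketch below is illustrative only: the height data, the `classify` threshold, and the choice of a single Gaussian are assumptions made for this example, not how production models work. The discriminative function maps an input to a label, while the generative side estimates a distribution and samples new values from it.

```python
import random
import statistics

def fit_gaussian(samples):
    """Estimate the mean and standard deviation of 1-D data."""
    return statistics.mean(samples), statistics.stdev(samples)

def sample_new(mean, std, n, rng):
    """Generative step: draw new points from the learned distribution."""
    return [rng.gauss(mean, std) for _ in range(n)]

def classify(x, threshold):
    """Discriminative step: map an input to a label, nothing more."""
    return "tall" if x >= threshold else "short"

rng = random.Random(0)  # fixed seed so repeated runs match
heights_cm = [158.0, 162.5, 171.0, 175.5, 180.0, 168.0, 165.5, 177.0]  # made-up data

mean, std = fit_gaussian(heights_cm)
new_heights = sample_new(mean, std, 5, rng)

print(classify(172.0, threshold=170.0))
print([round(h, 1) for h in new_heights])
```

The sampled heights are not copies of the training values; they are new numbers that follow the same estimated distribution.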

Key Insights

– Generative AI models learn probability distributions, not exact replicas

– The quality of output depends heavily on training data quality and model architecture

– Modern generative AI can combine multiple modalities (text + image + audio)

– These systems exhibit emergent properties not explicitly programmed

The technology’s rapid advancement stems from three converging factors: the availability of massive datasets, increased computational power through graphics processing units (GPUs), and architectural innovations like the transformer model introduced in 2017. Research from Stanford University indicates that AI research publications increased 300% between 2010 and 2022, with generative AI specifically accounting for a significant portion of that growth.

How Generative AI Works: Technical Foundations

Understanding how generative AI operates requires examining both the training phase and the inference phase. During training, models are exposed to vast amounts of data (billions of web pages, images, and other content), allowing them to learn statistical patterns. The model adjusts its internal parameters to minimize the difference between its predictions and the actual data, typically using an optimization algorithm called gradient descent.
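
As a rough illustration of that parameter-adjustment loop, here is a minimal sketch of gradient descent fitting a two-parameter linear model. The function name `train_linear`, the learning rate, and the tiny dataset are assumptions chosen for the example; real generative models apply the same idea to billions of parameters and far more complex loss functions.

```python
def train_linear(xs, ys, lr=0.01, steps=500):
    """Fit y ~ w * x + b by gradient descent on mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(steps):
        # Prediction error for every training example.
        errors = [(w * x + b) - y for x, y in zip(xs, ys)]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = 2.0 / n * sum(e * x for e, x in zip(errors, xs))
        grad_b = 2.0 / n * sum(errors)
        # Step against the gradient to reduce the loss.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [1.0, 3.0, 5.0, 7.0, 9.0]  # generated by y = 2x + 1

w, b = train_linear(xs, ys, lr=0.05, steps=2000)
print(round(w, 3), round(b, 3))
```

Each pass nudges `w` and `b` in the direction that shrinks the prediction error, so the learned values approach the slope and intercept that produced the data.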

Transformer architecture powers most modern language models. Unlike earlier recurrent neural networks that processed text sequentially, transformers process entire sequences simultaneously using attention mechanisms. This parallel processing enables training on datasets impossible for earlier architectures. The attention mechanism specifically allows models to weigh the importance of different parts of input data, capturing context and relationships across long distances in text.
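
The weighting step can be sketched as single-head scaled dot-product attention. This is a simplified illustration, not a full transformer layer: it uses plain Python lists, omits the learned query, key, and value projections and the multiple heads that real models use, and the function names are chosen for this example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of vectors."""
    d_k = len(keys[0])
    dim = len(values[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
                  for k in keys]
        weights = softmax(scores)
        # The output is a weighted average of the value vectors.
        outputs.append([sum(w * v[j] for w, v in zip(weights, values))
                        for j in range(dim)])
    return outputs

# Three toy token vectors attending to one another (self-attention).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)
print(mixed)
```

Every output row depends on all input rows at once, and the rows can be computed independently of one another, which is the property that makes this operation easy to parallelize.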

📊 TECHNICAL COMPARISON

| Architecture | Processing Type | Best For | Limitations |
|---|---|---|---|
| Transformer | Parallel | Text, code, images | High compute needs |
| Diffusion | Iterative denoising | High-quality images | Slow generation |
| GAN | Adversarial | Style transfer | Mode collapse |
| VAE | Latent space | Compression | Blurry outputs |

Large language models like GPT-4 and Claude use a decoder-only transformer architecture that predicts the next token in a sequence. When generating text, the model considers all previous tokens, calculates a probability distribution over possible next tokens, and samples from that distribution to produce output. This step repeats once per generated token, often hundreds or thousands of times for a complete response.
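
The generation loop can be sketched in a few lines. Everything model-specific here is a stand-in: `toy_scores` is a hypothetical lookup table that replaces a trained network (and, unlike a real model, looks only at the last token), the vocabulary is invented, and the `temperature` parameter is a common sampling control that the description above does not mention.

```python
import math
import random

def next_token_distribution(logits, temperature=1.0):
    """Convert per-token scores (logits) into sampling probabilities."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}

def sample_token(probs, rng):
    """Draw one token according to its probability."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]

def generate(score_fn, prompt, max_tokens, rng, temperature=1.0):
    """Autoregressive loop: score, sample, append, repeat."""
    tokens = list(prompt)
    for _ in range(max_tokens):
        probs = next_token_distribution(score_fn(tokens), temperature)
        token = sample_token(probs, rng)
        if token == "<end>":
            break
        tokens.append(token)
    return tokens

def toy_scores(tokens):
    """Hypothetical stand-in for a model; it only looks at the last token."""
    table = {
        "the": {"cat": 2.0, "dog": 1.5, "<end>": -1.0},
        "cat": {"sat": 2.5, "<end>": 0.5},
        "dog": {"sat": 2.0, "<end>": 0.5},
        "sat": {"<end>": 3.0, "the": 0.0},
    }
    return table[tokens[-1]]

output = generate(toy_scores, ["the"], max_tokens=10, rng=random.Random(0))
print(" ".join(output))
```

Lowering `temperature` concentrates probability on the highest-scoring token, which makes output more predictable; raising it spreads probability across more candidates.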

Diffusion models, dominant in image generation, work through a reverse denoising process. Starting from random noise, the model progressively removes noise by learning to predict what the original image looked like. Stable Diffusion, DALL-E 3, and Midjourney all employ variations of this approach, achieving photorealistic image generation that has sparked both excitement and concern about synthetic media.
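
The arithmetic behind a single denoising step can be sketched as follows, assuming one common formulation (DDPM-style) in which clean data and Gaussian noise are blended by a noise-level value, here called `alpha_bar`. In a real system a trained neural network predicts the noise and the step is repeated across many noise levels; this sketch passes in the true noise to show only the algebra, and all names are chosen for illustration.

```python
import math
import random

def add_noise(x0, alpha_bar, rng):
    """Forward process: blend clean data with Gaussian noise."""
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    noisy = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
             for x, e in zip(x0, noise)]
    return noisy, noise

def estimate_clean(noisy, predicted_noise, alpha_bar):
    """Invert the blend using a noise estimate to recover the clean data."""
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(noisy, predicted_noise)]

rng = random.Random(42)
clean = [0.2, -0.5, 0.9, 0.0]  # stand-in for pixel values
alpha_bar = 0.3                # smaller values mean more noise

noisy, true_noise = add_noise(clean, alpha_bar, rng)
# A trained network would supply the noise estimate; the true noise is
# used here, so the recovery is exact up to floating-point error.
recovered = estimate_clean(noisy, true_noise, alpha_bar)
print([round(x, 6) for x in recovered])
```

The better the noise estimate, the closer the recovered values are to the original data, which is why training focuses on making that prediction accurate.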

Types of Generative AI Models

The generative AI landscape encompasses multiple model types, each excelling at different content creation tasks. Understanding these categories helps users select appropriate tools for specific needs.

Large Language Models (LLMs)

LLMs specialize in text generation and conversation. Models like GPT-4, Claude 3, Gemini, and Llama 3 demonstrate remarkable capabilities across writing, analysis, coding, and reasoning tasks. These models typically contain billions of parameters, which enables nuanced understanding and generation of human language. OpenAI has not disclosed GPT-4’s size; unconfirmed reports put it at approximately 1.7 trillion parameters.

Leading LLMs by Capability:

– GPT-4 (OpenAI): Strongest overall reasoning, tool use, and multi-modal inputs

– Claude 3 (Anthropic): Exceptional long-context handling, nuanced safety alignment

– Gemini (Google): Native multi-modal architecture, deep Google ecosystem integration

– Llama 3 (Meta): Open-weight option (released under Meta’s community license) with strong performance-to-cost ratio

Image Generation Models

Image generation has progressed from abstract patterns to photorealistic outputs in just a few years. Diffusion models dominate this space, with Midjourney, DALL-E 3, and Stable Diffusion offering distinct capabilities. These models can generate images from text descriptions (text-to-image), modify existing images (image-to-image), or create variations of provided images.

Audio and Video Generation

Emerging capabilities include text-to-speech systems such as ElevenLabs and music generators such as Suno AI, which creates complete songs from text prompts. Video generation models from OpenAI (Sora), Runway, and others demonstrate the ability to create multi-second videos from descriptions, though this technology remains less mature than text and image generation.

Code Generation Models

Specialized tools like GitHub Copilot, Amazon CodeWhisperer, and Claude Code assist developers by suggesting code completions, writing functions from descriptions, and debugging existing code. These tools have demonstrated measurable productivity improvements, with studies showing developers complete tasks 25-55% faster when using AI-assisted coding.

Real-World Applications and Use Cases

Generative AI’s practical applications span virtually every industry, transforming workflows and creating new possibilities. Examining concrete use cases reveals both the technology’s current capabilities and its limitations.

Enterprise Applications

Customer Service: Companies deploy AI chatbots for 24/7 customer support, handling routine inquiries while escalating complex issues to human agents. Shopify reports that AI assistants resolve 70% of customer messages without human intervention.

Content Creation: Marketing teams use generative AI for drafting blog posts, social media content, and advertising copy. While human oversight remains essential for accuracy and brand voice, these tools accelerate production cycles significantly.

Software Development: Beyond code completion, developers use AI for documentation generation, code review suggestions, and refactoring. Microsoft reports that GitHub Copilot users accept AI suggestions approximately 30% of the time, with accepted suggestions averaging 40-50% of completed code in supported languages.

Healthcare Applications

Medical researchers apply generative AI to drug discovery, using models to predict molecular properties and generate novel compound candidates. Insilico Medicine has used AI to identify new drug candidates in months rather than years, demonstrating potential for accelerating pharmaceutical development. Additionally, AI systems assist medical documentation, converting patient conversations into structured clinical notes.

Creative Industries

Writers, artists, and musicians increasingly incorporate AI tools into creative workflows. While debates continue about authorship and creativity, many professionals view generative AI as a collaborative tool—handling initial drafts or generating variations that human creators refine. Adobe’s Firefly integration into Creative Cloud illustrates this hybrid approach, offering AI features within professional creative software.

Benefits and Limitations: An Honest Assessment

Realistic assessment of generative AI requires acknowledging both substantial benefits and meaningful limitations. Overhyping capabilities leads to disappointment and skepticism; dismissing the technology ignores demonstrated impact.

Documented Benefits

| Benefit | Measured Impact | Source |
|---|---|---|
| Productivity gains | 25-55% faster task completion | GitHub/Sourcegraph studies |
| Content velocity | 10x more first-draft content | Content marketing benchmarks |
| Accessibility | Real-time translation accuracy | WEF AI adoption report |
| Cost reduction | 30-60% cost savings in eligible tasks | McKinsey 2023 analysis |

Generative AI excels at synthesizing information, generating first drafts, and handling repetitive tasks. It democratizes capabilities previously requiring specialized expertise—enabling individuals without professional design skills to create visuals, or non-programmers to write functional code for simple applications.

Genuine Limitations

However, significant constraints exist. Models frequently produce incorrect information presented with high confidence—a phenomenon researchers call “hallucination.” Training data cutoffs mean models lack awareness of events after their training period. Biases present in training data can manifest in outputs, requiring careful oversight.

Research from Vectara indicates that even the best models hallucinate approximately 3-5% of the time in controlled benchmarks, though real-world rates may vary significantly by task. Furthermore, these models lack true understanding—they pattern-match without comprehension, meaning outputs may be superficially correct while containing logical errors invisible to casual review.

Critical Considerations:

– Always verify factual claims from authoritative sources

– Maintain human oversight for consequential decisions

– Understand that AI augments human capability rather than replacing judgment

– Consider copyright and intellectual property implications of training data

The Future of Generative AI

The trajectory of generative AI points toward increased capability, accessibility, and integration. Several trends shape the technology’s evolution.

Multi-modal models that seamlessly handle text, images, audio, and video within unified frameworks represent a major development direction. OpenAI’s GPT-4V, Google’s Gemini, and Anthropic’s Claude demonstrate native multi-modal capabilities, enabling more natural human-AI interaction.

Agentic AI—systems that can take autonomous actions rather than simply responding to prompts—emerges as the next frontier. These systems can plan sequences of actions, use external tools, and iterate toward goals rather than producing single-shot responses. This capability shift could dramatically expand practical applications.
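
The plan, act, and iterate cycle can be illustrated with a toy loop. This is a hedged sketch rather than a real agent framework: `toy_policy` is a hypothetical hand-written rule standing in for a model that decides the next step, and `lookup_price` is an invented tool backed by a fixed catalog.

```python
def run_agent(goal, choose_action, tools, max_steps=5):
    """Loop: pick an action, run the tool, record the result, repeat."""
    history = []
    for _ in range(max_steps):
        action, argument = choose_action(goal, history)
        if action == "finish":
            return argument, history
        result = tools[action](argument)
        history.append((action, argument, result))
    return None, history  # step budget exhausted without finishing

def lookup_price(item):
    """Hypothetical tool: return a price from a fixed catalog."""
    return {"notebook": 4.5, "pen": 1.25}[item]

def toy_policy(goal, history):
    """Hypothetical stand-in for a model deciding the next step."""
    looked_up = {arg for action, arg, _ in history if action == "lookup_price"}
    for item in goal:
        if item not in looked_up:
            return "lookup_price", item
    # Every item has a price, so finish with the total.
    return "finish", sum(result for _, _, result in history)

answer, steps = run_agent(["notebook", "pen"], toy_policy,
                          {"lookup_price": lookup_price})
print(answer, len(steps))
```

The `max_steps` cap reflects a practical design choice: because the loop acts on its own, it needs an explicit budget so a confused policy cannot run indefinitely.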

Enterprise adoption continues accelerating, with specialized solutions emerging for industry-specific needs. Healthcare, legal, financial services, and manufacturing sectors develop domain-optimized models that balance capability with compliance requirements.

Regulatory frameworks worldwide develop alongside the technology. The EU’s AI Act, proposed US executive orders, and various national strategies attempt to balance innovation support with risk mitigation. Organizations must navigate evolving compliance requirements while adopting these powerful tools.

Frequently Asked Questions

Is generative AI the same as artificial intelligence?

No. Generative AI is a subset of artificial intelligence focused specifically on creating new content. Traditional AI includes various approaches like machine learning, computer vision, and natural language processing that analyze, classify, or predict based on existing data. All generative AI is AI, but not all AI is generative.

Can generative AI think or understand like humans?

No. Generative AI models identify statistical patterns in data and generate outputs that resemble their training data. They lack consciousness, genuine understanding, and independent reasoning. Outputs may appear intelligent but result from pattern matching rather than comprehension. The technology produces sophisticated predictions about what text, image, or other content should follow given previous context.

Is generative AI free to use?

Several options exist across price points. OpenAI offers free tiers for ChatGPT with limitations, while full access requires paid subscriptions. Microsoft Copilot, Claude (free tier), and various other tools provide free access with varying restrictions. Enterprise versions with additional features and guarantees require business licensing.

Will generative AI replace human jobs?

Current evidence suggests generative AI transforms jobs more than it eliminates them. Research on the impact of automation indicates that rather than fully replacing most occupations, AI automates specific tasks within jobs, shifting worker focus toward higher-value activities. Adaptation, continuous learning, and human-AI collaboration skills determine the impact on an individual more than the technology alone.

How accurate is generative AI?

Accuracy varies significantly by task and model. Simple factual recall often succeeds, but complex reasoning, recent events, and specialized domain knowledge frequently produce errors. Independent evaluation from Vectara and other researchers shows hallucination rates of 3-15% depending on query type. Critical evaluation and verification remain essential for any consequential application.

Is it legal to use AI-generated content?

Legal frameworks are still developing. Generally, using AI-generated content for draft creation, brainstorming, or assistance is permissible. Copyright questions around training data and output ownership continue to be tested in courts. Commercial use policies vary by platform, and some jurisdictions require disclosure of AI-generated content in specific contexts. Legal counsel can help navigate situation-specific requirements.
